Local citation work is unglamorous. It always has been. You’re chasing consistency across dozens of directories, fixing a phone number someone entered wrong three years ago, reconciling four slightly different versions of the same business name. The fundamentals of accuracy, consistency, and trust haven’t shifted much, and honestly, they probably won’t.

What has changed is the toolkit available to people doing this work seriously.

LLMs have landed in the local SEO conversation, and the reactions tend toward extremes. People either assume the models will automate everything and break things, or dismiss them as content-spinning nonsense with no real application. Neither framing is right. The practitioners actually getting value from these tools are using them in a much quieter way: for preparation, cleanup, and formatting, not for anything that touches a live directory.

The biggest myth worth killing early: LLMs are not a submission tool. They shouldn’t be logging into Yelp, creating Google Business Profiles, or clicking “verify.” That’s a fast way to get listings flagged or suspended. Where they genuinely help is in everything that happens before a human sits down to submit, and in the quality control pass that comes after.

Think of the tool like a very fast, very patient copy editor with a strong preference for consistency. That’s the lane.

Getting the Data Ready

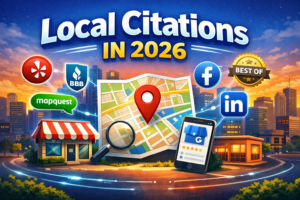

Clients send messy intake forms. That’s just reality. You’ll get a business name that changes between the email subject line and the attached document. You’ll get two phone numbers with no explanation of which is primary. The address will be formatted three different ways, sometimes within the same paragraph.

It’s genuinely useful to feed that raw intake into an LLM as-is, without pre-editing it, and ask it to extract and structure the core fields. Not because the model is doing anything magical, but because it’s fast and consistent at a task that’s tedious and error-prone when humans do it manually. Missing fields get flagged. Inconsistencies surface before they get baked into fifty directory listings.

At this point, nothing has been submitted anywhere. You’re just imposing order on chaos.
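Whatever the model extracts, it’s worth running a plain, deterministic check over the result so that gaps and conflicts are impossible to miss. Here is a minimal sketch in Python. The field names, the `review_intake` function, and the sample business are all made up for illustration, and it assumes the LLM step has already returned every distinct value it found for each field.

```python
# Hypothetical field names; adjust to whatever your intake form collects.
REQUIRED_FIELDS = ("name", "address", "phone", "website")


def review_intake(candidates: dict[str, list[str]]) -> dict[str, list[str]]:
    """Sort required fields into 'missing' and 'conflicting'.

    `candidates` maps each field to every distinct value found in the raw
    intake (for example, two phone numbers pulled from one email).
    """
    missing = []
    conflicting = []
    for name in REQUIRED_FIELDS:
        # Ignore case and surrounding whitespace when counting distinct values.
        values = {v.strip().lower() for v in candidates.get(name, []) if v.strip()}
        if not values:
            missing.append(name)
        elif len(values) > 1:
            conflicting.append(name)
    return {"missing": missing, "conflicting": conflicting}


# Invented sample data: two names, two phones, no website.
extracted = {
    "name": ["Acme Plumbing", "Acme Plumbing LLC"],
    "address": ["12 Main St, Suite 4"],
    "phone": ["(555) 010-0199", "555-010-0142"],
}
report = review_intake(extracted)
```

In this sample the report lists `website` as missing and `name` and `phone` as conflicting. Those are questions for the client, not something for the model or the script to guess at.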

The Canonical NAP Is Everything

If there’s one step that determines how painful or painless a citation campaign will be six months from now, it’s this one. A single, locked, approved version of the business name, address, phone number, and website — agreed upon before anyone touches a directory.

This sounds obvious. It almost never gets done properly.

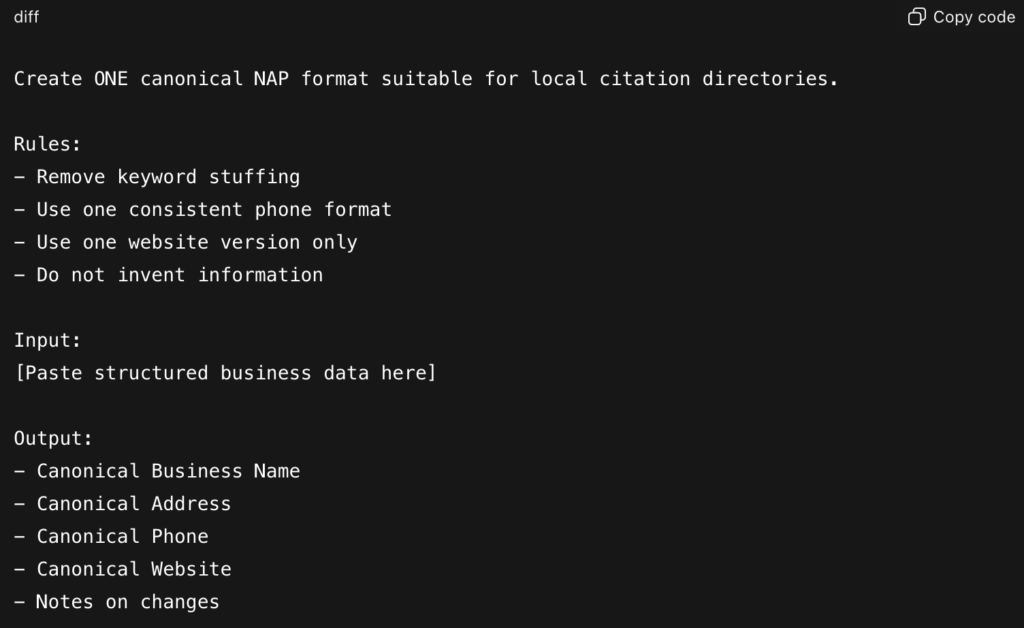

LLMs help here by catching the subtle stuff: keyword-stuffed business names that’ll get flagged, inconsistent suite number formatting, http vs. https, the trailing slash question on URLs. Small things that compound. You paste the structured data in, run a normalization pass, and come out the other side with something a human can review and officially approve.

That approval step matters. The model’s output is a draft; it becomes the canonical record only when a human approves it.
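Some of that normalization pass is mechanical enough to pin down in code, which also gives the reviewer something repeatable to check the model’s output against. The sketch below is one possible set of house rules, not a standard: it assumes US 10-digit phone numbers, https with no trailing slash, and “Suite N” as the preferred suite format. The function names are illustrative. Which convention you pick matters less than applying it everywhere.

```python
import re
from urllib.parse import urlsplit, urlunsplit


def normalize_phone(raw: str) -> str:
    """Format a 10-digit US number as (XXX) XXX-XXXX; raise on anything else."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the US country code
    if len(digits) != 10:
        raise ValueError(f"cannot normalize phone: {raw!r}")
    return f"({digits[:3]}) {digits[3:6]}-{digits[6:]}"


def normalize_url(raw: str) -> str:
    """Force https, lowercase the host, drop a trailing slash and any fragment."""
    candidate = raw.strip()
    if "://" not in candidate:
        candidate = "https://" + candidate
    parts = urlsplit(candidate)
    path = parts.path.rstrip("/")
    return urlunsplit(("https", parts.netloc.lower(), path, parts.query, ""))


def normalize_suite(address: str) -> str:
    """Rewrite 'Ste 4', 'Ste. 4', 'suite #4', and a bare '#4' as 'Suite 4'."""
    pattern = r"(?i)(?:\b(?:suite|ste)\b\.?\s*#?\s*|#\s*)(\w+)"
    return re.sub(pattern, r"Suite \1", address)
```

For example, `normalize_url("http://Example.com/")` returns `https://example.com`, and `normalize_suite("12 Main St, Ste. 4")` returns `12 Main St, Suite 4`. Keyword-stuffed business names are a judgment call and stay with the human reviewer.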

Categories and Descriptions (The Underrated Part)

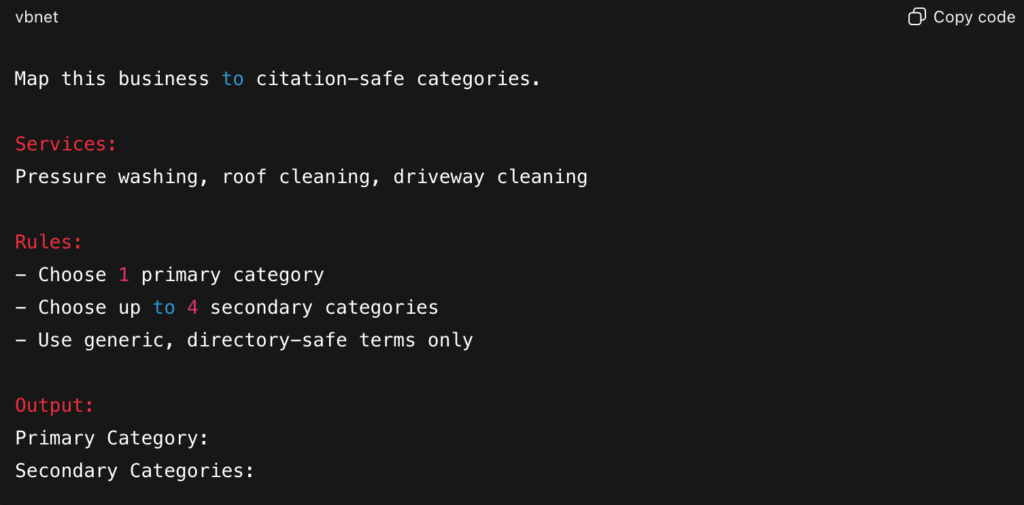

Category mapping across directories is one of those tasks that sounds simple until you’re doing it for fifty clients. Every platform uses different terminology. What Yelp calls one thing, Angi calls something slightly different. Doing this manually means inconsistent choices and, often, someone on the team defaulting to the most generic option every time just to get through it.

An LLM can take a service list and map it against likely directory categories quickly — not perfectly, but fast enough that a human reviewer can approve or adjust rather than starting from scratch. The difference between generating and reviewing is significant when you’re working at scale.
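One way to keep that generate-then-review split honest is to treat every model suggestion as a proposal that stays pending until someone signs off. A small sketch of that idea follows. The class, the function names, and the category labels are invented for illustration and do not come from any directory’s real taxonomy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CategoryProposal:
    directory: str                # placeholder name, e.g. "Directory A"
    service: str                  # the service as the client describes it
    suggested: str                # category drafted by the LLM
    status: str = "pending"       # "pending", "approved", or "rejected"
    final: Optional[str] = None   # set only when a human approves


def approve(proposal: CategoryProposal, override: Optional[str] = None) -> None:
    """Record a reviewer's decision, optionally replacing the suggestion."""
    proposal.final = override or proposal.suggested
    proposal.status = "approved"


def ready_to_submit(proposals: list[CategoryProposal]) -> dict[str, list[str]]:
    """Group human-approved categories by directory; skip everything else."""
    ready: dict[str, list[str]] = {}
    for p in proposals:
        if p.status == "approved" and p.final:
            ready.setdefault(p.directory, []).append(p.final)
    return ready
```

Anything still pending never reaches the submitter’s worksheet, so an unreviewed suggestion can’t end up in a live listing by accident.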

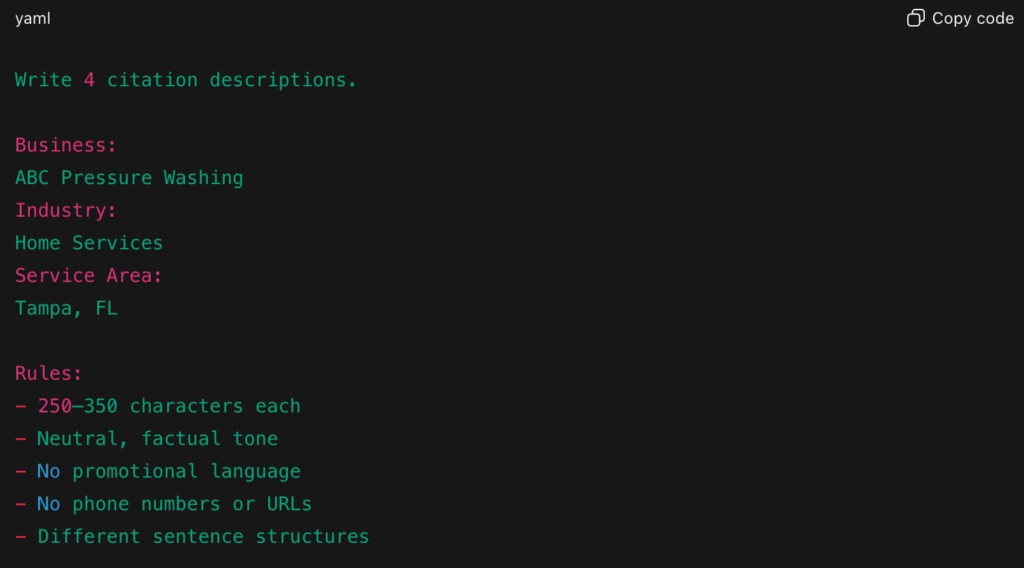

Descriptions are similar. The failure modes on both ends are well-documented: either every listing gets the exact same copy-pasted paragraph, or someone over-optimizes each one with city names and service terms until it reads like a doorway page. Neither is great. A smarter approach is writing a small set of neutral, informational variants — three or four — and rotating them. The LLM can draft those variants quickly. A human should read them before they go anywhere.

One thing worth noting: the descriptions that tend to age best are boring. Informational, not salesy. “This company provides X services in Y area” rather than “The BEST pressure washing in Tampa!” The latter might feel like it’s doing more work, but it accumulates risk over time.
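Both halves of this, rotating a few approved variants and catching copy that drifts toward salesy, can be sketched in a few lines. The hype word list below is an illustrative starting point rather than any established rule, and the function names are hypothetical. The flag is a prompt for a human to take a second look, not a verdict.

```python
import re

# Illustrative patterns only; extend them from your own review notes.
HYPE = re.compile(r"!|\b(?:best|top-rated|cheapest)\b", re.IGNORECASE)


def flag_hype(text: str) -> list[str]:
    """Return the exclamation marks and superlatives found in a description."""
    return [m.group(0) for m in HYPE.finditer(text)]


def assign_variants(directories: list[str], variants: list[str]) -> dict[str, str]:
    """Rotate approved variants across directories in a repeatable order."""
    if not variants:
        raise ValueError("need at least one approved description variant")
    # Sorting first makes the assignment the same on every run.
    ordered = sorted(directories)
    return {d: variants[i % len(variants)] for i, d in enumerate(ordered)}
```

Running `flag_hype` on “The BEST pressure washing in Tampa!” returns `["BEST", "!"]`, while the plain informational version comes back empty. One caveat with the round-robin assignment: adding a directory later can shift which variant the existing ones map to, so save the assignment once it has been used for submission instead of recomputing it.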

Submission Is Still a Human Job

There’s no elegant way to say this. Automated directory submission creates problems. It has always created problems. The tools that promised to blast listings across hundreds of directories at once spent years generating duplicates, half-completed profiles, and data inconsistencies that took significant effort to unwind.

Manual submission — someone actually logging in, filling out the form, selecting categories, writing the description, verifying the listing — is slower and more expensive. It’s also more reliable and more compliant with how most directories actually want to be treated.

LLMs don’t change this calculation. They make the human submitter faster and less likely to make mistakes, because the data they’re working from is cleaner going in.

After It’s Live

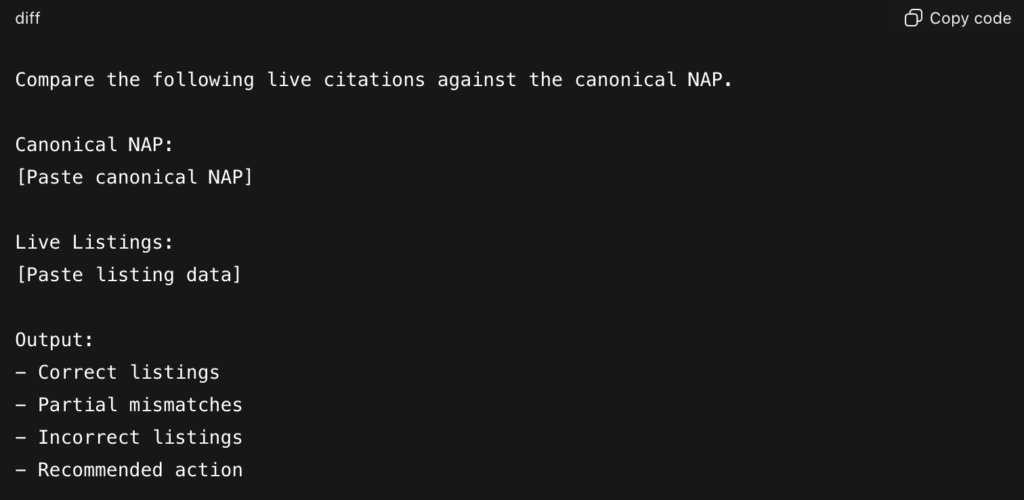

Post-submission QA tends to get skipped because it’s not billable in any obvious way. That’s a mistake. Listings drift. Old data resurfaces. A platform pulls information from somewhere unexpected and overwrites what you submitted.

Running periodic checks catches this stuff before it becomes a client problem: paste the live listing data alongside the canonical NAP and ask an LLM to surface discrepancies. It’s not glamorous work, but it’s the kind of maintenance that actually keeps a local presence clean over time.

For cleanup campaigns on older clients with citation histories stretching back years, this comparison step is especially useful. You can move through a large volume of existing listings quickly and flag only the ones that need human attention.
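For the fields that are strictly structured, a simple scripted comparison can sit next to the LLM pass and act as a first filter. The sketch below assumes both the canonical record and the live listing are already available as dictionaries with the same field names. How the live data gets collected is out of scope here. The matching rules (digits only for phones, case and whitespace ignored elsewhere) are an assumption you may want to tighten.

```python
import re


def _digits(value: str) -> str:
    """Keep digits only, so '(555) 010-0199' equals '555-010-0199'."""
    return re.sub(r"\D", "", value)


def _loose(value: str) -> str:
    """Lowercase and collapse runs of whitespace."""
    return " ".join(value.lower().split())


def find_discrepancies(
    canonical: dict[str, str], listing: dict[str, str]
) -> dict[str, tuple[str, str]]:
    """Return {field: (expected, found)} for every field that differs."""
    issues = {}
    for field, expected in canonical.items():
        found = listing.get(field, "")  # a missing field counts as a mismatch
        key = _digits if field == "phone" else _loose
        if key(expected) != key(found):
            issues[field] = (expected, found)
    return issues
```

An empty result means the listing matches on every canonical field under these rules. Anything else goes into the queue for a human to look at, which is the point: review only the listings that need it.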

The broader point is this: LLMs are a multiplier for process quality, not a replacement for it. If your citation workflow is sloppy, they’ll help you produce sloppy results faster. If your workflow is solid — canonical data, human submission, real QA — they’ll help you scale that without introducing chaos.

That’s a real benefit. It’s just a quieter one than the marketing around these tools tends to suggest.